AI is everywhere, it seems. If you’ve used a search engine or social network in the last 8 years, you have had some experience with machine learning. Earlier this week, Intel announced its first processor dedicated to AI (artificial intelligence) at an event in Haifa, Israel.

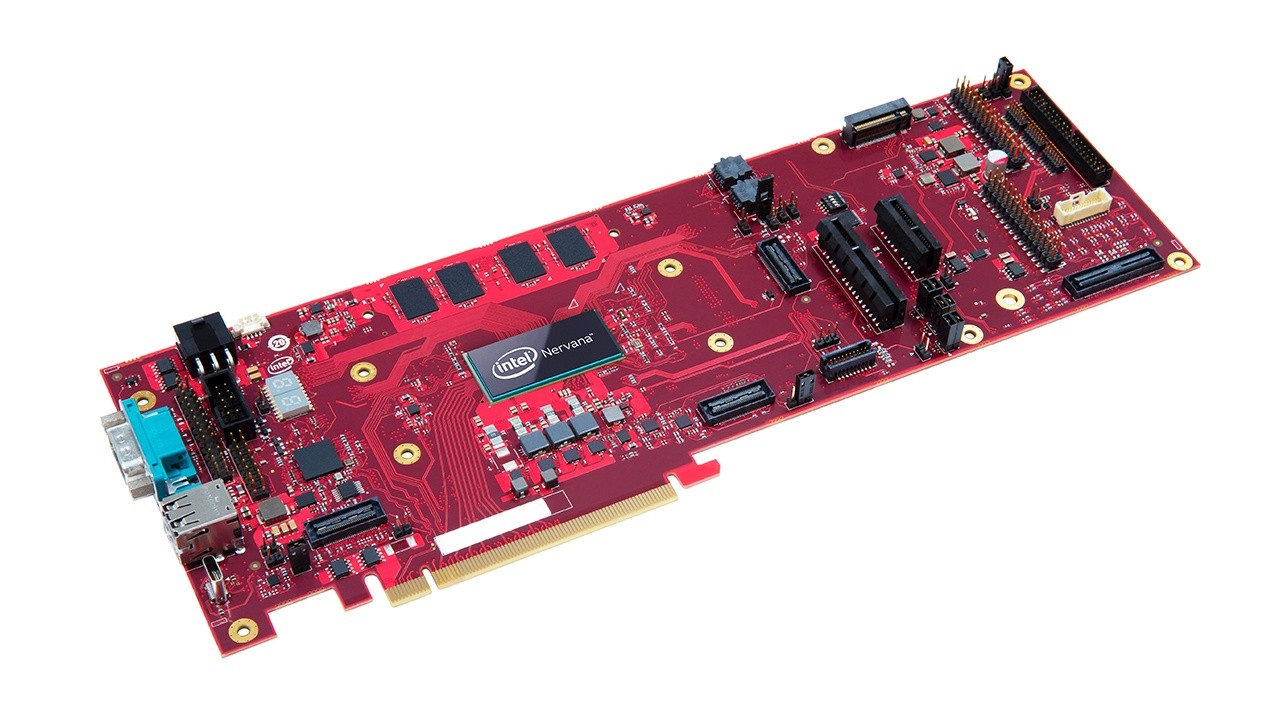

Developed at Intel’s labs in Haifa, the Nervana Neural Network Processor for Inference (NNP-I), also called “Springhill,” is said to be designed for large data centers running AI workloads. According to Reuters, it is based on a slightly modified 10-nanometer Ice Lake processor, which lets it handle intensive processing loads while using only minimal amounts of energy.

Intel said several customers, including Facebook, have already begun to use the chip in their data centers.

Intel has adopted an “AI everywhere” strategy, and the Nervana NNP-I chip is just one component of it. The chip behemoth has gone for an approach that involves a mix of GPUs, field-programmable gate arrays (FPGAs), and custom application-specific integrated circuits (ASICs) for complex AI tasks. Those tasks include creating neural networks geared for object recognition and speech translation, and executing a process known as inference with trained models.

The Nervana NNP-I chips are built specifically for the latter task. The chip is so small that it can be deployed in a data center on an M.2 device, the form factor normally used for storage, that slots into a standard M.2 port on the motherboard. The concept is to let Intel’s industry-standard Xeon processors offload inference workloads to the NNP-I so they can focus on general computing tasks.

Intel said the Nervana NNP-I chip is a 10-nanometer Ice Lake die modified by stripping away two computing cores and the graphics engine to accommodate 12 Inference Compute Engines. These are designed to help speed up the execution of neural networks trained for tasks like speech and image recognition.

Currently, most inference workloads end up running on a CPU, even when accelerators like Nvidia’s T-Series offer better performance. It remains to be seen if Intel’s Springhill, when deployed via an M.2 device, can effectively compete with more specialized GPU-based processor architectures.

I’m Daniel Payne. I’ve been a freelance writer, video, and web guy since 1988. My passion is technology, from the latest cameras to cutting-edge ways the internet is used to improve medicine. I write for Internet News Flash and am helping with the online resurrection of Digital Content Creators Magazine.